With COVID-19 shifting us all online, and as someone who has taught online (fully and partially), I have had a number of colleagues approach me with questions and requests for advice. Though I don’t consider myself an “expert” on online teaching, I can (and want to) share what I do know, along with my own experiences, and I’m going to try to do that here.

Continue reading “Biology online/open-book exams, Part 1: general tips/considerations & examples”

Category: teaching & learning

UPDATED: 1st Pandemic book club meeting: Costa’s “99 Tips for Creating Simple and Sustainable Educational Videos”

The Doodle poll results are in for the first book club meeting to discuss the newly published (and incredibly timely) book “99 Tips for Creating Simple and Sustainable Educational Videos”. The most popular time was Mon. May 4, 2-3 PM (Eastern Time).

I’ve set up a Microsoft Teams meeting (and hope it will work for this). Teams is available for download on computer, phone, iPad: https://teams.microsoft.com/downloads . You can also access it through a browser: https://teams.microsoft.com/

Need the book?

It can be purchased:

– directly from Stylus, the publisher. The paperback and ePub versions are both on sale right now (along with other online ed books). Note that prices are in USD: https://styluspub.presswarehouse.com/landing/onlinelearning2020 (Outside the US, it will likely take some additional time to get the paperback.)

It’s a really new book, and Chapters/Indigo and Amazon don’t have the hard copy yet, but eBooks are available:

Kobo has the ePub format: https://www.kobo.com/ca/en/ebook/99-tips-for-creating-simple-and-sustainable-educational-videos (If buying in Canada, this is a few $ cheaper than the Stylus ePub, given the current exchange rate).

The Kindle version is available:

In Canada – CDN$: https://www.amazon.ca/Creating-Simple-Sustainable-Educational-Videos-ebook/dp/B086VW87QH/ref=sr_1_1?keywords=karen+costa&qid=1587391775&sr=8-1

US: https://www.amazon.com/Creating-Simple-Sustainable-Educational-Videos-ebook-dp-B086VW87QH/dp/B086VW87QH/ref=mt_kindle?_encoding=UTF8&me=&qid=

I’m looking forward to seeing/meeting other folks interested in this book (and making educational videos)!

Pandemic time higher ed book club

Having bought a few higher-education-related books just before or after The Great Shift to Remote, I found myself seeking some motivation to actually dig into them. I thought a little social interaction (and accountability) might be helpful, and I floated out a tweet asking if others might be interested in discussing a couple of these: Karen Costa’s “99 Tips for Creating Simple and Sustainable Educational Videos” (at the time of writing on sale with some other online learning books) and Kevin Gannon’s “Radical Hope: a Teaching Manifesto”. I was thrilled to see how many people responded!

Conference season!

I haven’t written a blog post in a long time. Often, the regular academic year is pretty hectic, so I’m less likely in general to post here, but the past few months have been … unusual. (Like I have to point that out!) It’s been tough for me to justify taking the time and effort to write about teaching and learning stuff lately, as these topics seem almost frivolous, given unfolding world events. (As a Canadian living in the US, I’m still concerned about the current political and societal situation, and I may talk about that more later, but that’s another post … maybe.) However, life goes on, and as we get into conference season, it’s time to think (and talk!) about things beyond day-to-day teaching (and other concerns).

The Windsor-Oakland Teaching and Learning conference is always a great start to conference season for me, running early in May. This year, I was particularly impressed by the keynote from Peter Felten (@pfeltenNC): “Valuing Teaching: What Matters Most”. This led me to read the most recent book that he co-authored, The Undergraduate Experience (Jossey-Bass, 2016 – Indigo Chapters link). Like his keynote, the book is rich in providing clear information, backed up by evidence, with illustrative examples of positive change at various universities. I’ve read a few books centred on change in undergraduate education, which I’ve typically found to include advice that may not be realistic for my own school, or not feasible for someone to act upon who is not in upper administration. I actually felt like the Felten et al. book provided elements where I may be able to make a difference (beyond my regular goal of teaching and advising as well as I can, in my current position).

The annual oCUBE May UnConference is coming up very soon! This is the eighth one, and I’m happy to see that our grass-roots community of practice is still going strong. We are a group of individuals across various institutions, in a number of different roles, all interested in improving Biology education. (It started as an Ontario group, but we now have members from other provinces, and even in the USA!) The UnConference format seems to work very well for the kinds of discussions our group has, and having a mixture of original and new members contributing means that we get different perspectives and a diversity of interests and experience in our sessions. Our meeting location at Shamrock Lodge (near Port Carling, ON) is a lovely retreat away from our respective cities … and I personally think that the blueberry pancakes promote creativity and well-being! (The Shamrock Lodge folks treat us – and FEED us – very well.)

The annual conference for the Canadian Society of Microbiologists (CSM) is in June, in Waterloo, ON. The conference program looks great, and I’m excited to see Ed Yong (@EdYong209) and Jack Gilbert (@gilbertjacka) speak again. (Unabashed fangirl here!!!) Even more exciting, for me, is that we are holding our third pre-conference CSM FOME (Forum On Microbiology Education) workshop on June 20! Our keynote facilitator, Karen Smith (@DrMyth115), is a great microbiology educator and communicator, and our planning committee was faced with a challenge we had not faced in the previous two years – we have more workshop/presentation proposals than we can actually fit in our allotted time. CSM FOME co-chair Josie Libertucci (@Jos_Tucci) and I are thrilled to see how many people have registered for the workshop so far, and are looking forward to seeing this section grow in the CSM in future. (P.S. Is it rude to bring my copy of “I Contain Multitudes” to ask Ed Yong to sign it? What about getting a selfie with Ed and Jack?)

We also have the Western Conference on Science Education (WCSE) in July this summer (July 5-7). One of my favourite conferences, it runs every other year at Western University in London, ON, attracting high-quality workshops/presentations/posters, and opportunities to interact with highly engaged educators throughout the conference both in and out of conference activities. Early Registration for 2017 is still open at reduced rates until Friday, May 26th. If you’ve been to WCSE before, you can once again save $50 on your registration if you find a Newb (WCSE newbie!) to bring along (and if you’ve never been to WCSE, being a “Newb” with a previous WCSE-attendee will save you $50, too!).

It’s going to be a busy summer, and that’s a good antidote to some of the other stuff going on in the world right now. What are you looking forward to over the next few months?

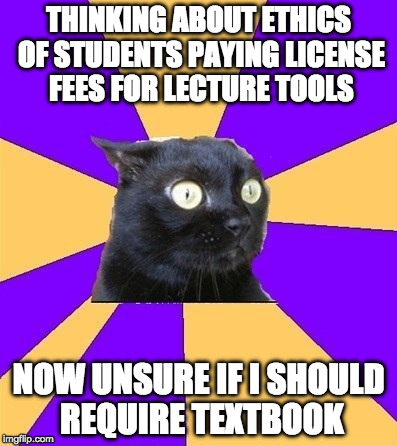

Ancillary fee anxiety

I had originally planned to write (and actually wrote a draft of) a post to explore my questions and concerns about asking students to pay for access to a web-based classroom response system (WBCRS henceforth), like Lecture Tools (now integrated into Echo 360), Top Hat, or Learning Catalytics. My major concern? These tools are basically ways to teach huge classes better, to bring in the interactivity and communication aspects difficult to achieve in the large class setting – kind of a “large class tax” on students. (I’ve used Lecture Tools for several terms – see my previous posts here, here, and here.)

I’d hoped to gain some clarity, maybe spark some conversation with colleagues about the issues relating to using a WBCRS at a cost to students. As part of my thinking, I considered some of the other ancillary items we routinely ask students to purchase (i.e., not usually included in their tuition, but required for a course). I was originally thinking that a teaching tool is really different from a required textbook, dissection kit, safety glasses, or a lab coat. Now I’m concerned not only about the ethics/fairness of asking students to purchase licenses for a WBCRS, but also about requiring textbooks and disposable lab coats!

Tips from Tanya: Some points for students about technology in the classroom

I wrote this for my students (after Tamara Kelly and I facilitated a session on student devices in the classroom at the Western Conference on Science Education 2015) and am sharing it here, in hopes it may be of interest/use to others! Please note that my classroom policies about device use are specific to the courses I currently teach.

Almost everyone has a smartphone, laptop, tablet, or combination of these devices with them during their waking hours (and beyond, in some cases). There is huge potential for distraction using these devices – which is fine if you’re waiting in a long, boring line or on the bus, but can be problematic in the classroom*.

While a few profs ban these devices in their classes, I’m taking a different approach. In much of the world, including most work-places, these devices aren’t banned, and people are expected to be able to manage work/life and various distractions. That being said, I can understand why some instructors have different policies for their own classes.

Some of our in-class activities will make use of online resources, so I’ll encourage you to use them, if you wish to do so. I’ll be using LectureTools, which allows me to ask you questions that you can answer on your device … and for you to ask me questions in the system (without raising your hand).

If you don’t want to use a device in our class, that’s fine! One way to avoid distraction is to keep these devices out of sight (and hearing), and I’m happy to support those who take this approach. There will be alternative activities for students who don’t use the in-class system.

If you do want to use your device(s) in class, there are some things to be aware of:

Continue reading “Tips from Tanya: Some points for students about technology in the classroom”

BYOD … or bring me your questions! It’s all good.

About a year ago, I switched from using clickers in my classes to a web-based classroom response system (CRS) – Lecture Tools – where students bring their own internet-enabled devices (BYOD), as I’ve mentioned here before. After three terms, I am generally happy with the system as a replacement for clickers, and I’ll likely talk more about that later.

This is a rather rambly account of something small I tried that worked out. I’m hoping that it might be of use/interest to other folks (or, at least, maybe some of the references will be). Oh, and it has a bit of my philosophy on class attendance. (I’m sure you were curious!)

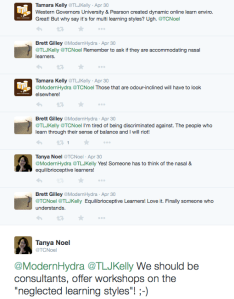

The tenacious myth of preferred learning styles

Learning styles (the idea we each have a preferred style, such as visual or auditory, and that those should be catered to for effective learning) are a myth. This shouldn’t need to be said again. Other people have said it well. (You can skip below for a list of references.)

But it’s a tenacious, popular myth. I understand how attractive the idea is … when I was a neophyte graduate student in a TA training workshop, I remember the satisfaction of completing a learning styles inventory (like this: http://www.personal.psu.edu/bxb11/LSI/LSI.htm & this: http://www.learning-styles-online.com/inventory/ & this: http://www.educationplanner.org/students/self-assessments/learning-styles.shtml & I really need to stop because this is just irritating me …) and figuring out that I was a “kinaesthetic” learner. Of course! Of course, I was a science grad student, and this made sense! We do experiments! I learn by doing! (I didn’t think about the fact that I could probably have found a rationale for being a “visual” learner …) It was an easy way for me to think about my learning! And to justify why I didn’t perform so well in some courses … those ones were not tailored to my learning style! (Woe to those poor nasal learners … )

That was back in 1994.

Now there is ample evidence that teaching towards preferred learning styles does not seem to actually help people learn. Even trying to reliably categorize people into preferred learning styles is fraught with issues. Meanwhile, many teachers/professors and students waste time and energy on this, efforts they could be directing elsewhere. (Check out the book “Make It Stick: The Science of Successful Learning” by Brown, Roediger and McDaniel for a good overview of what we DO know about teaching/learning based on recent cognitive science research.)

Experiential Food Education

In the last couple of days, my most popular tweets have involved science-y food items:

Both of these images were brought to my attention by a couple of the smart, young women I am lucky to know (Fatima and Renee), and judging from the number of favourites and re-tweets, the images seemed to be appreciated by many of the folks who follow me (and their followers). Food does seem to be a really good way to get people’s attention and engagement!

More evidence of benefits from increased course structure

Sarah L. Eddy and Kelly A. Hogan (2014) recently published a paper “Getting Under the Hood: How and for Whom Does Increasing Course Structure Work?”, a nice example of the next wave of discipline-based educational research (DBER) that goes beyond asking “Does active learning work?” to explore details of how active learning interventions actually work, and differential impacts on sub-populations of students. Here, Eddy and Hogan describe their results of a study based on the work led by Scott Freeman at the University of Washington (see Freeman et al. 2011, Haak et al. 2011).
